Gaywallet (they/it)

I’m gay

- 16 Posts

- 18 Comments

1·24 hours ago

Absolutely, thank you!

24·3 days ago

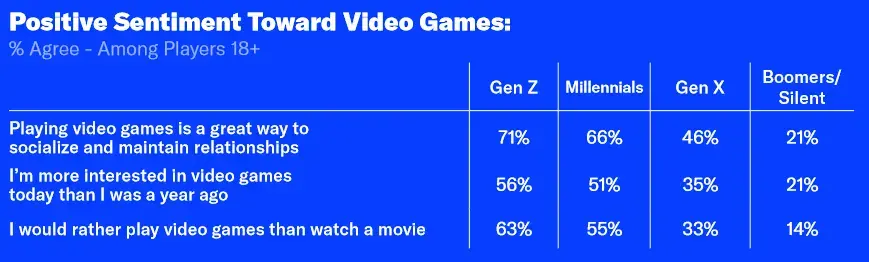

For those who are curious, here are the exact questions used and the percentages by demographic.

Generally speaking I’d also fall into the “rather play games” category, but it really depends on the context. Unfortunately there aren’t too many couch co-op games anymore, so if the goal is to spend time with someone, playing a video game often doesn’t work great.

1·6 days ago

oof, big flaw there

6·6 days ago

> Any information humanity has ever preserved in any format is worthless

It’s like this person only just discovered science, lol. Has this person never realized that bias is a thing? There’s a reason we learn to cite our sources, because people need the context of what bias is being shown. Entire civilizations have been erased by people who conquered them, do you really think they didn’t re-write the history of who these people are? Has this person never followed scientific advancement, where people test and validate that results can be reproduced?

Humans are absolutely gonna human. The author is right to realize that a single source holds a lot less factual accuracy than many sources, but it’s catastrophizing to call it worthless, and it ignores how additional information can add to or detract from a particular claim, so long as we examine the biases present in the creation of said information resources.

5·8 days ago

Cheers for this, found two games that seem interesting that I never heard about before!

5·10 days ago

This isn’t just about GPT. Of note in the article is this example:

> The AI assistant conducted a Breast Imaging Reporting and Data System (BI-RADS) assessment on each scan. Researchers knew beforehand which mammograms had cancer but set up the AI to provide an incorrect answer for a subset of the scans. When the AI provided an incorrect result, researchers found inexperienced and moderately experienced radiologists dropped their cancer-detecting accuracy from around 80% to about 22%. Very experienced radiologists’ accuracy dropped from nearly 80% to 45%.

In this case, researchers manually spoiled the results of a non-generative AI designed to highlight areas of interest. Being presented with incorrect information reduced the accuracy of the radiologist. This kind of bias/issue is important to highlight and is of critical importance when we talk about when and how to ethically introduce any form of computerized assistance in healthcare.

4·10 days ago

ah yes, i forgot that this article was written specifically to address you and only you

8·13 days ago

I appreciate your warning, and would like to echo it, from a safety perspective.

I would also like to point out that we should be approaching this, as with every risk, from a harm reduction standpoint. A drug with impurities that could save your life or prevent serious harm is better than no drug and death. People need to be empowered to make the best decisions they can, given the available resources and education.

13·14 days ago

Venus rhymes with a piece of anatomy often found on men. Obviously they got it backwards

4·21 days ago

Been thinking about picking this one up

10·21 days ago

> It’s FUCKING OBVIOUS

What is obvious to you is not always obvious to others. There are already countless examples of AI being used to do things like sort through job applicants, decide who gets audited for child protective services, and decide who can get a visa for a country.

But it’s also more insidious than that, because the far-reaching implications of this bias often cannot be predicted. For example, excluding all gender data from training ended up making sexism worse in this real-world example of financial lending assisted by AI, and the same was true for Apple’s credit card. We even have full-blown articles showing how the removal of data can actually reinforce bias, indicating that it’s not just what material is used to train the model that matters, but also what data is not used or is explicitly removed.

This is so much more complicated than “this is obvious”, and there are a lot of signs pointing towards the need for regulation around AI and ML models being used in places where it really matters, such as decision making, until we understand them a lot better.

10·22 days ago

big weird flex but okay vibes except actually not okay

3·22 days ago

Like most science press releases I’m not holding my breath

6·22 days ago

Game changer for smart watches if this turns out to work and scale well

0·1 month ago

I think she wants you to just rack up a bunch of medical debt so she can cancel it, gotta think in loopholes like the big companies

0·1 year ago

We can’t edit other people’s titles and this is a good article, but I wanted to don my mod hat for a second to mention that this title is sensationalized, and we would appreciate it if you leave the original article title intact in the future.

probably not, in the same way that your grandma calls a video chat a facetime or your representative might call the internet a series of tubes

AI is the default word for any kind of machine magic now